Building and training a language model

What does it take to build a language model? To many, the "art" consists
of selecting the right software library, usually written in Python, and
running it against a downloaded training corpus. This can be effective
(especially if one has access to the requisite hardware resources) yet
somewhat empty: running a packaged, canned solution offers little by
way of insight or understanding.

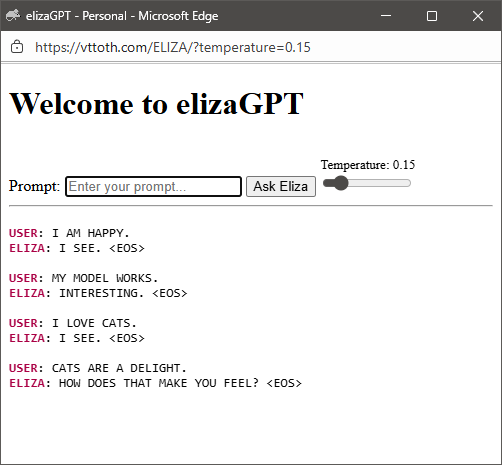

On this page, we present an alternative: elizaGPT,
a custom C++ implementation of a complete multihead transformer
with backpropagation, trained on a corpus crafted
from a generated set of ELIZA user queries and responses.

The model we demonstrate here is absolutely tiny: only 38,848 parameters.
Contrast this with frontier-class Large Language Models, with parameter counts
in the hundreds of billions or more. Yet remarkably, even such a simple
model can achieve linguistic coherence with a properly constructed training
corpus and well-managed learning.
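To make the notion of a parameter count concrete, the following is a minimal sketch of how one might tally the trainable parameters of a small GPT-style transformer. Everything in it is an assumption made for illustration: the `TransformerConfig` struct, the `count_parameters` function, and the layout it counts (learned positional embeddings, biases on every linear layer, two layer norms per block, an untied output projection) are not taken from elizaGPT, whose actual architecture is not described here, and no attempt is made to reproduce the 38,848 figure.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical configuration. These fields are illustrative assumptions,
// not elizaGPT's actual architecture.
struct TransformerConfig {
    std::size_t vocab;    // number of distinct tokens
    std::size_t context;  // maximum sequence length (learned positions)
    std::size_t d_model;  // embedding width
    std::size_t d_ff;     // hidden width of the feed-forward sublayer
    std::size_t layers;   // number of transformer blocks
};

// Counts trainable parameters for one common GPT-style layout.
// The number of attention heads does not appear: in standard multihead
// attention the heads split d_model between them, so the projection
// matrices have the same total size regardless of the head count.
std::size_t count_parameters(const TransformerConfig& c) {
    const std::size_t d = c.d_model;
    const std::size_t embeddings = c.vocab * d + c.context * d;
    const std::size_t attention = 4 * (d * d + d);  // Q, K, V and output projections
    const std::size_t feed_forward = (d * c.d_ff + c.d_ff) + (c.d_ff * d + d);
    const std::size_t layer_norms = 2 * (2 * d);    // gain and bias, twice per block
    const std::size_t block = attention + feed_forward + layer_norms;
    const std::size_t final_norm = 2 * d;
    const std::size_t output_head = d * c.vocab + c.vocab;
    return embeddings + c.layers * block + final_norm + output_head;
}
```

With the made-up values `TransformerConfig{64, 32, 32, 64, 2}`, this layout comes to 22,336 parameters, which is the same order of magnitude as the model discussed here and several million times smaller than a frontier-class model.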

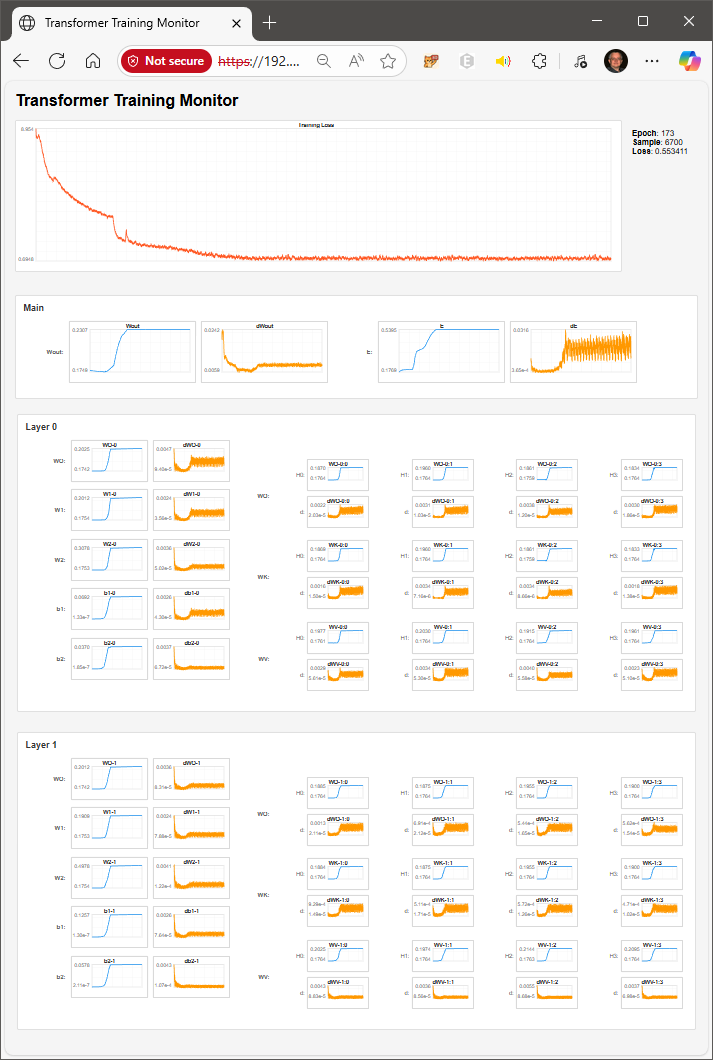

To facilitate well-managed learning, we also constructed a generic dashboard
that shows a near real-time view of key aggregate model parameters and
gradients.

The view provided by this representation makes it possible to respond
proactively during training to changes in model behavior, adjusting
training parameters to achieve optimal results.
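As an illustration of what "aggregate" values a training dashboard might track, here is a minimal sketch that reduces one tensor of weights or gradients to a few summary numbers. The `TensorStats` struct, the `summarize` function, and the choice of statistics (mean, root mean square, largest absolute value) are assumptions made for this example. The text does not specify which quantities the actual dashboard displays.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Summary of one parameter or gradient tensor.
// Names and statistics are illustrative, not taken from elizaGPT.
struct TensorStats {
    double mean;     // arithmetic mean of the values
    double rms;      // root mean square: typical magnitude of an entry
    double max_abs;  // largest absolute value: helps spot exploding gradients
};

// Computes the summary in a single pass over the flattened tensor.
// An empty tensor yields all zeros.
TensorStats summarize(const std::vector<float>& values) {
    TensorStats s{0.0, 0.0, 0.0};
    if (values.empty()) return s;
    double sum = 0.0;
    double sum_sq = 0.0;
    for (float v : values) {
        const double x = v;  // accumulate in double to limit rounding error
        sum += x;
        sum_sq += x * x;
        s.max_abs = std::max(s.max_abs, std::fabs(x));
    }
    const double n = static_cast<double>(values.size());
    s.mean = sum / n;
    s.rms = std::sqrt(sum_sq / n);
    return s;
}
```

Calling `summarize` on each weight matrix and its gradient every few training steps would give a compact time series to plot. For instance, a gradient RMS that collapses toward zero or a `max_abs` that grows steadily could prompt a change to the learning rate.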

While this "elizaGPT" experiment is, in its own right, merely an academic
exercise that offers, at best, some pedagogical value, it also has the
potential to do more. A model this small has a very modest memory footprint,
and it can run inference even in scripted programming environments with
no hardware acceleration. (The interactive demonstration presented here
runs entirely in a Web browser, implemented in pure
JavaScript with no acceleration.)

This can lead to practical applications, such
as "micro-AI" models controlling non-player characters (NPCs) in both
single-user and multi-user computer games. Such NPCs could then routinely
interact not only with players but with each other, in unpredictable,
lifelike ways. They may also be more adept at responding to unexpected
user actions than more conventional scripted NPCs, even those equipped with
ELIZA-like scripted intelligence.
